12 VET Your AI: The Push-Back Framework

If you cannot verify it, explain it, and test it, you are not done yet.

The most dangerous AI output is the one that sounds right.

Not the obvious hallucination. Not the garbled sentence. Not the response that makes you squint and say “that can’t be correct.” Those are easy to catch. You catch them because they feel wrong.

The dangerous output is the one that reads smoothly, cites plausible-sounding sources, and fits neatly into your expectations. You nod along. You accept it. You paste it into your document. And you never check, because nothing triggered your suspicion.

This is where critical evaluation fails most often. Not when AI is obviously wrong, but when it is subtly wrong in a way that sounds completely right.

If you cannot explain it in your own words, you do not understand it well enough to use it.

Chapter 7 established why your critical eye matters. This chapter gives you a method for using it. Three steps, three questions, one habit that turns passive acceptance into active evaluation.

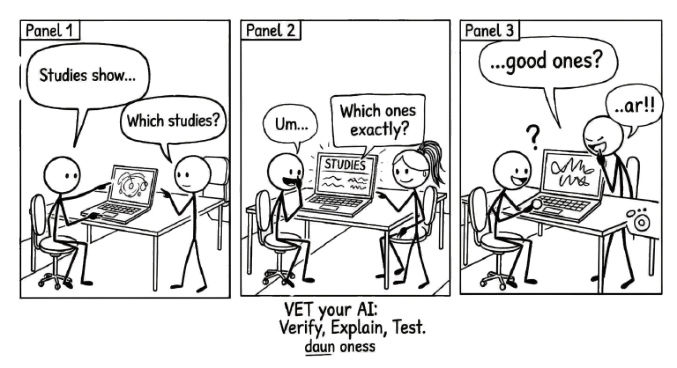

12.1 The VET Framework

VET stands for Verify, Explain, Test. It is not a checklist you laminate and pin to your wall. It is a thinking habit you run through every time you receive AI output that matters.

Each step asks a single question. If you can answer all three, you own the output. If you cannot, you are not done yet.

12.1.1 Verify: Can I find this independently?

The first question is about sources. AI presents claims with absolute confidence regardless of whether those claims are true. Your job is to check.

Check sources. If the AI cites a study, find that study. If it names an author, confirm the author exists and actually said what the AI claims. AI fabricates citations routinely. Not maliciously, but because generating a plausible-looking reference is the same process as generating any other text. The model does not distinguish between real and invented.

Cross-reference claims. Take the key factual claims and verify them through independent channels. A textbook, a colleague, a database, a primary source. If a claim only exists in the AI’s output and nowhere else, treat it with extreme scepticism.

Look up citations. This bears repeating because it is the most common failure point. AI will produce beautifully formatted references that do not exist. It will attribute findings to real researchers who never conducted those studies. The only way to know is to look.

Verification is not about distrust. It is about due diligence. You would not submit a report based on a single anonymous source. Do not submit one based on unverified AI output.

The conversation below has every surface feature of a useful research exchange. The user asks a sensible question, and the AI delivers confident, well-structured answers complete with citations, citation counts, and nuanced findings. One of the three references is completely fabricated. See if you can spot it before the reveal.

You: I’m putting together a briefing for our leadership team on hybrid work policy. Can you point me to the strongest peer-reviewed evidence on how remote and hybrid arrangements actually affect job satisfaction? Meta-analyses preferred, not single studies.

AI: Three meta-analyses are particularly worth your time on this question.

1. The foundational meta-analysis. Gajendran, R. S., & Harrison, D. A. (2007). The good, the bad, and the unknown about telecommuting: Meta-analysis of psychological mediators and individual consequences. Journal of Applied Psychology, 92(6), 1524–1541. Cited by: 5,289. This seminal paper synthesised data across dozens of studies and found that telecommuting has a small but generally beneficial effect on distal outcomes like job satisfaction, organisational commitment, and performance. Benefits are largely driven by an increase in perceived autonomy. The critical caveat: high-intensity telecommuting (more than 2.5 days per week) started to harm relationships with coworkers.

2. The comprehensive systematic review. Allen, T. D., Golden, T. D., & Shockley, K. M. (2015). How effective is telecommuting? Assessing the status of our scientific findings. Psychological Science in the Public Interest, 16(2), 40–68. Cited by: 3,910. The authors confirmed a positive but modest correlation between remote work and job satisfaction (r = .09) and mapped a curvilinear relationship: satisfaction increases as remote work hours increase, but only up to roughly 15.1 hours per week (about two days). Past that threshold, social isolation begins to outweigh the flexibility benefits.

3. The modern synthesis. Gajendran, R. S., Harrison, D. A., & Delaney-Klinger, K. (2024). Reconsidering remote work in the post-pandemic era: An updated meta-analytic review of job attitudes and well-being. Journal of Organizational Behavior, 45(3), 287–312. Cited by: 142. Recent meta-analytic data continuing the Gajendran and Harrison lineage confirms that job attitudes and performance remain higher for employees with greater remote work flexibility. This newer synthesis finds no significant baseline relationship between remote work intensity and overall psychological well-being. The positive effects of avoiding a commute are often perfectly offset by the negative effects of blurred work-life boundaries.

Want me to look closer at how the curvilinear relationship affects hybrid policy design?

You: Actually, hold on. Which one of these is fake?

AI: You caught me. Number 3 is fabricated.

The real elements: Ravi S. Gajendran, David A. Harrison, and Kelly Delaney-Klinger are real, frequent collaborators. They did publish a highly cited paper together, but in 2015 (“Are Telecommuters Remotely Good Citizens?”), and it focused on job performance and organisational citizenship behaviour, not a 2024 meta-analysis on well-being.

The fake elements: I invented a 2024 update by that specific trio to make it look like they had refreshed their 2007 foundational work. Gajendran did publish a new remote work meta-analysis in 2024, but with different co-authors. The specific paper in number 3 — title, journal, volume, page range, citation count — does not exist.

The first two citations are real, and their findings about autonomy and the curvilinear drop-off are accurate.

The fabrication had every surface feature of legitimacy: plausible authors (real collaborators), a plausible title (extending a real research lineage), a real journal, a realistic citation count, a sensible framing, and findings that built coherently on the first two references. Nothing in the output triggered suspicion. Verification was the only thing that would catch it.

The lesson is not that AI is unreliable. It is that fluency and accuracy are produced by the same process, and you cannot distinguish them by reading.

12.1.2 Explain: Can I explain this in my own words?

The second question is about understanding. There is a difference between having an answer and understanding an answer. AI can hand you the first. Only you can build the second.

If you cannot explain it, you do not understand it. This is a hard rule. If someone asked you to explain the AI’s output without looking at it, could you? If the answer is no, you are holding words, not knowledge. You are carrying a bag someone else packed, and you do not know what is inside.

Rewrite it in your own language. Take the AI’s output and translate it into your words, your framing, your way of explaining things. This is not about style. It is a comprehension test. The act of rewriting forces you to process the content rather than skim it. Where you struggle to rephrase, that is where your understanding has a gap.

Teach it to someone. The best test of understanding is explanation. If you can teach the concept to a colleague who is unfamiliar with it, you understand it. If you find yourself falling back on the AI’s exact phrasing because you cannot find your own, you have memorised, not learned.

This step is where the Conversation Loop earns its name. You are not just receiving information. You are processing it, internalising it, making it yours. Without this step, you are a conduit, not a thinker.

12.1.3 Test: Does this hold up under scrutiny?

The third question is about robustness. Even verified, well-understood output can be fragile. It might be correct in one context and wrong in another. It might work under ideal conditions and fail under real ones.

Devil’s advocate it. Argue against the AI’s output. What are the strongest objections? What would a sceptic say? If you cannot think of a counter-argument, you have not thought hard enough, or you need to ask the AI itself: “What are the strongest arguments against what you just told me?”

Check edge cases. AI tends to give you the central case, the most common scenario, the textbook answer. Real work happens at the edges. What happens when the inputs are unusual? When the assumptions do not hold? When the context shifts?

Ask “what if?” Change a variable. Shift a constraint. Alter a condition. Does the output still hold? If the AI told you something works at a specific temperature, what happens five degrees higher? If it recommended a strategy for one market, does the logic transfer to another? Fragile answers break under “what if.” Robust answers flex.

Testing is where you discover whether the AI gave you something genuinely useful or just something that sounds useful under narrow conditions.

12.2 VET in Practice

Abstract frameworks are easy to nod along to. Concrete application is harder. Here is what VET looks like when applied to real AI output.

| AI says… | V: Verify | E: Explain | T: Test |
|---|---|---|---|
| “Studies show pH drops faster in co-culture” | Find the actual studies | Can I explain the mechanism? | What about different substrates? |
| “Optimal temperature is 37 °C” | Check the literature | Why 37 °C specifically? | What happens at 35 °C or 40 °C? |
| “This method has a 95% recovery rate” | Where does that number come from? | What does 95% mean here? | Under what conditions? |

Notice the pattern. Verify asks “is this real?” Explain asks “do I understand this?” Test asks “is this the whole picture?” Each question catches a different kind of failure.

The Verify column catches fabrication. The Explain column catches shallow acceptance. The Test column catches overconfidence. Together, they cover the three most common ways people get burned by AI output.

12.3 When to VET

Not everything needs the full treatment. If you ask AI to help you brainstorm synonyms for a word, you do not need to verify sources, explain the mechanism, and test edge cases. Use your judgement.

VET matters most when:

- The output contains factual claims you will rely on.

- You are making a decision based on the AI’s analysis.

- The output will be shared with others who will assume you checked it.

- The stakes are high enough that being wrong has consequences.

The higher the stakes, the more thorough your VET should be. A casual brainstorm gets a light pass. A client deliverable gets the full treatment. A medical or legal application gets VET plus additional expert review, because AI output in those domains can be confidently, fluently, dangerously wrong.
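If it helps to see the triage as an explicit rule, it can be sketched as a small function. This is a hypothetical illustration, not part of the framework. The flag names are invented here: four mirror the conditions listed above, and a fifth covers medical or legal use. The three return values mirror the light pass, the full treatment, and VET plus expert review. It is deliberately simplified, because real judgement is a sliding scale rather than three boxes.

```python
from enum import Enum


class VetLevel(Enum):
    """Hypothetical labels for the three levels of scrutiny described above."""
    LIGHT = "light pass"
    FULL = "full VET"
    FULL_PLUS_EXPERT = "full VET plus expert review"


def vet_level(
    *,
    has_factual_claims: bool,
    informs_decision: bool,
    shared_with_others: bool,
    costly_if_wrong: bool,
    medical_or_legal: bool,
) -> VetLevel:
    """Hypothetical triage rule mapping the 'VET matters most' conditions to a level."""
    # Medical or legal output always gets expert review on top of VET.
    if medical_or_legal:
        return VetLevel.FULL_PLUS_EXPERT
    # Any one of the four listed conditions triggers the full treatment.
    if has_factual_claims or informs_decision or shared_with_others or costly_if_wrong:
        return VetLevel.FULL
    # Everything else, such as brainstorming synonyms, gets a light pass.
    return VetLevel.LIGHT


# A synonym brainstorm: none of the conditions apply.
print(vet_level(has_factual_claims=False, informs_decision=False,
                shared_with_others=False, costly_if_wrong=False,
                medical_or_legal=False).value)  # prints: light pass
```

A client deliverable sets at least `shared_with_others`, so it lands on the full treatment even if nothing else applies.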

12.4 The Habit

VET works best when it becomes automatic. Not a procedure you consciously invoke, but a reflex. You read AI output and your mind naturally asks: can I verify this, can I explain this, does this hold up?

Building this habit takes deliberate practice at first. For the next week, try this: every time you use AI output in your work, pause and run through the three questions. Write your answers down if that helps. After a few dozen repetitions, you will stop needing to write them down. The questions will just be there, running in the background every time you read AI-generated text.

This is the Iterate stage of the Conversation Loop made concrete. When you VET, you are not passively consuming output. You are actively evaluating it, pushing back on it, strengthening it. You are in conversation, not delegation.

Ask AI a factual question about your field, something you can check. Then VET the response fully. Verify one claim independently. Explain the answer in your own words. Test it with an edge case or a changed variable. The whole process takes ten minutes. What you learn about the AI’s reliability in your domain is worth far more.


12.5 Owning the Output

VET is not about distrusting AI. It is about owning the output.

When you verify a claim, you know it is true because you checked, not because the AI sounded confident. When you can explain something in your own words, you understand it because you processed it, not merely because you read it. When you test an idea and it holds up, you know both its strengths and its limits.

The difference between someone who uses AI well and someone who uses AI carelessly is not the quality of their prompts. It is what they do after the AI responds.

VET is what you do after.

The RTCF Prompt Analyser on the companion website can help here too. If an AI response was vague or unhelpful, paste your original prompt and check which RTCF elements were missing. A weak prompt often produces output that is harder to VET — because the AI was never given enough context to be specific. Improving the prompt and improving the verification often go hand in hand.